Harness Engineering and the Art of Building Agents

The industry is waking up to what we built into Lawpath Cortex from day one: that the model is just the engine, and the harness is where the real engineering happens. OpenClaw, LangChain, and Anthropic are all converging on the same insight.

The Model Is Not the Agent

There’s a phrase making the rounds in AI engineering circles right now: Agent = Model + Harness. LangChain published it. Anthropic built an entire engineering blog series around it. And OpenClaw, the fastest-growing open-source AI agent framework in history, validated it so convincingly that its creator was hired by OpenAI within months of launch.

The idea is simple. A language model, no matter how capable, is not an agent. It becomes one only when surrounded by the right infrastructure: context assembly, tool orchestration, verification loops, memory systems, safety rails, and observability. That surrounding infrastructure is the harness.

What’s remarkable to us at Lawpath is that this framing describes exactly what we’ve been building for the past two years. When we designed Lawpath Cortex, the agentic infrastructure behind Atlas, we weren’t following a playbook called “harness engineering.” The playbook didn’t exist yet. But the problems were real, and the solutions we arrived at are strikingly similar to what the industry is now converging on.

This post is about that convergence: what harness engineering is, why it matters more than model selection, and how the patterns we built into Cortex anticipated the discipline before it had a name.

The Rise of Harness Engineering

The term “harness engineering” entered mainstream AI vocabulary in early 2026, but the concept has been building for over a year.

In June 2025, Shopify CEO Tobi Lutke popularised the idea that “context engineering” had superseded prompt engineering as the core skill for building with AI. Andrej Karpathy, formerly of OpenAI, defined it as “the delicate art and science of filling the context window with just the right information for the next step.” Anthropic published a detailed engineering guide on effective context engineering for AI agents, arguing that context is a finite resource with diminishing marginal returns, and that LLMs experience “context rot” as token count increases.

Harness engineering takes this further. Where context engineering focusses on what goes into the context window, harness engineering encompasses the entire system surrounding the model: how context is assembled, how tools are orchestrated, how outputs are verified, how state is maintained across sessions, and how the system degrades gracefully when things go wrong.

LangChain’s Vivek Trivedy codified the framework in “The Anatomy of an Agent Harness”, defining a harness as “every piece of code, configuration, and execution logic that isn’t the model itself.” The harness provides what raw models cannot: durable state, tool execution, real-time knowledge access, environment setup, and enforceable constraints.

The evidence that harness design matters more than model selection is now substantial:

-

SWE-Agent (Yang et al., NeurIPS 2024) demonstrated that designing a better agent-computer interface, without changing the underlying model, improved software engineering task resolution from 3.97% to 12.47% with GPT-4. Same model. Different harness. 64% better outcomes.

-

LangChain’s coding agent improved from 52.8% to 66.5% accuracy on TerminalBench 2.0 by changing only the harness components, keeping the model fixed. That’s a 26% improvement without touching the model.

-

OpenAI’s Codex team reported that swapping the underlying model shifts output quality by only 10-15%, while changing harness design determines whether the system works at all.

The emerging consensus: the model is commodity. The harness is moat.

OpenClaw: A Harness by Any Other Name

If you want to understand why harness engineering matters, look at OpenClaw.

Peter Steinberger created OpenClaw as a side project in late 2025, a simple experiment connecting an AI model to WhatsApp. Within months, it became the fastest-growing open-source project in AI history, reaching 100,000 GitHub stars by February 2026. By that point, Steinberger had joined OpenAI, and OpenClaw had been transferred to an independent foundation under the Linux Foundation umbrella.

What made OpenClaw explode wasn’t the model it used. OpenClaw is model-agnostic; it supports Claude, GPT, Gemini, and local models interchangeably. What made it resonate was everything around the model:

- Persistent identity and memory through structured files (SOUL.md, MEMORY.md, USER.md)

- Tool access via the Agent Control Protocol (ACP), which spawns specialised sub-agents for tasks like coding, research, and file management

- Multi-platform integration across Slack, Discord, WhatsApp, and other messaging surfaces

- Session management with both one-shot and persistent interaction modes

- Parallel orchestration of multiple agent sessions for complex workflows

As Attila Hajdrik wrote on Medium shortly after Steinberger’s move to OpenAI: “People weren’t showing up only because they wanted ‘agents.’ They showed up because they wanted a harness: something that makes advanced capability feel usable, repeatable, and safe.”

OpenClaw is, fundamentally, a harness. The model is a replaceable component. The value is in the orchestration, the memory, the tool integration, the session management, and the operational safety story. Strip away the harness and you’re left with an API call.

What Cortex Already Knew

When we read about harness engineering in 2026, we experienced a particular kind of recognition. Not surprise, but validation. The problems that harness engineering solves are the same problems we’ve been solving since we started building Cortex.

Here’s where the patterns align.

Context Assembly as Architecture

Anthropic’s context engineering guide describes the challenge of curating “the optimal set of all tokens during LLM inference, including system instructions, tools, external data, and message history.” They warn that context is finite, that more isn’t always better, and that agents need structured approaches to deciding what makes it into the window.

We built exactly this in Cortex’s Prompt Forge, our system prompt assembly architecture. Prompt Forge constructs prompts from standardised sections (identity, critical rules, user context, knowledge base, response framework, special handling) with explicit separation between static (cacheable) and dynamic content. The static layers, including legal knowledge, behavioural rules, and professional boundaries, are cached at the provider level. The dynamic layers, including your business profile, compliance status, and temporal context, are rebuilt fresh for every conversation.

We arrived at this not because someone told us to do “context engineering,” but because a single agent prompt had grown beyond 8,000 tokens and we needed a disciplined way to manage what went in and what stayed out. The LangChain harness framework now recommends the same separation. Anthropic’s guide warns about the same context rot we observed when we loaded too much customer history into a single window.

Model-Agnostic Orchestration

A core tenet of harness engineering is that the harness should be model-agnostic. OpenClaw demonstrated this by supporting every major model family interchangeably. The harness provides the structure; the model provides the generation.

Cortex has operated this way from the start. Our multi-model orchestration routes each interaction to the optimal model based on a complexity classifier. Simple factual questions go to lightweight models for speed. Complex legal analysis engages deeper reasoning models with extended thinking. Batch processing uses cost-optimised models without sacrificing quality. We orchestrate across multiple providers and model families, routing invisibly based on the task.

When a new model drops (and they drop constantly), we evaluate it, add it to the routing table, and the harness handles the rest. Our customers never notice the switch. They notice that Atlas keeps getting better.

Verification Loops and Quality Gates

The harness engineering literature emphasises self-verification as a critical component. LangChain describes evaluation gates. Anthropic’s multi-agent harness separates planning, generation, and evaluation into distinct agent roles.

We implemented this pattern through our web search quality gates: a three-phase system where query analysis feeds into domain-filtered search, then passes through quality assessment before reaching the user. If results don’t meet thresholds (minimum citation count, content length, authoritative source presence), the system retries with broader parameters. The domain registry maintains tiered authority, with .gov.au sources ranked highest.

In professional services, a confident wrong answer is worse than a slower right one. Our quality gates enforce that principle at the architecture level, not as a guideline, but as a hard constraint. This is what harness engineering calls “enforceable constraints,” and we built them because accuracy in legal contexts is non-negotiable.

Persistent Memory and Progressive Understanding

OpenClaw’s structured memory files (SOUL.md, MEMORY.md, USER.md) give agents persistent identity across sessions. This is one of the features that drove adoption: the feeling that the agent remembers you.

Cortex achieves this through progressive due diligence and the customer 360. When a business owner joins Lawpath, autonomous research agents build a structured profile from public data before the first interaction. Every subsequent touchpoint, including documents created, consultations booked, questions asked, and compliance events observed, enriches that profile through our faceted customer narratives, embedded in a vector database for semantic retrieval.

The result is identical in intent to OpenClaw’s memory system, but optimised for a specific domain: understanding Australian small businesses deeply enough to provide genuinely relevant legal and compliance guidance from the first interaction.

Tool Orchestration and Agentic Loops

The ReAct pattern (Reason, Act, Observe, Repeat) is now standard in harness engineering. Both OpenClaw’s ACP and LangChain’s agent framework implement variations of the same loop: the agent reasons about what it needs, calls a tool, observes the result, and decides what to do next.

Our tool-use agentic loop implements exactly this. When a business owner mentions hiring, the agent calls document search, checks existing agreements, retrieves award rates, and synthesises everything into a grounded response. Tool calls happen in a multi-round loop where the agent autonomously decides how to gather information. This is the same pattern, applied to a domain where accuracy has real consequences.

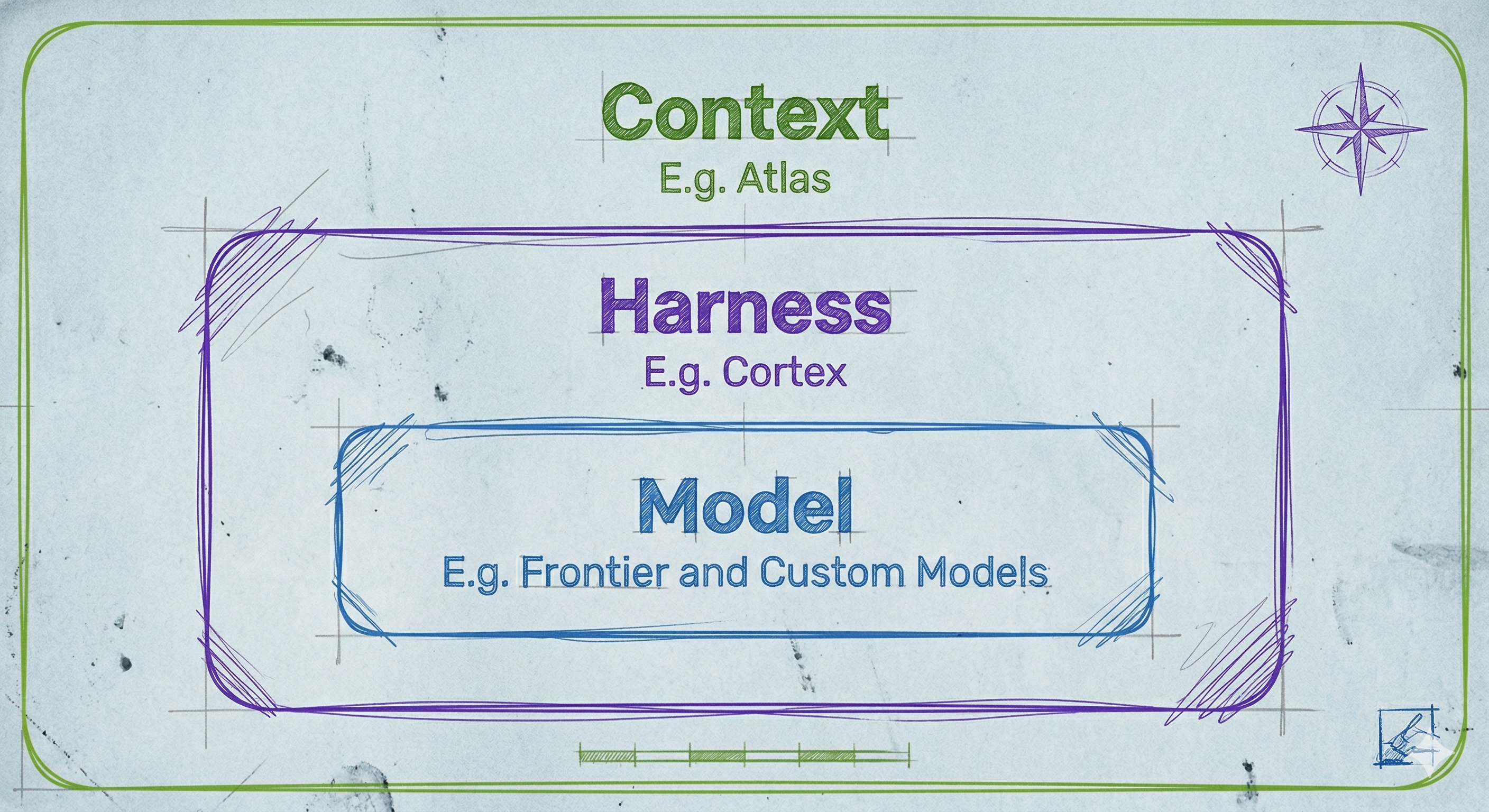

The Three Layers

A useful mental model for understanding where value actually lives in agent systems has three concentric layers:

The Model sits at the centre. It provides raw intelligence: text generation, reasoning, pattern recognition. Models are increasingly commodity. The gap between frontier models is narrowing. Swapping one for another changes output quality by 10-15%.

The Harness wraps the model. It provides context assembly, tool orchestration, verification, memory, and operational infrastructure. This is where the engineering discipline lives, and where most performance gains come from. Improving the harness while keeping the model fixed consistently delivers 25-65% improvements in task completion.

The Context wraps the harness. It provides domain knowledge, user understanding, regulatory awareness, and institutional memory. In our case, this is Australian legal knowledge, customer profiles, compliance calendars, and the accumulated intelligence of serving 650,000+ businesses.

Each layer multiplies the effectiveness of the layers below it. A better model with a poor harness and no context will underperform a good model with a well-designed harness and rich domain context. This is why Cortex, with its deep harness engineering and Australian legal context, can outperform general-purpose agents running on more capable models.

Why This Matters for Domain-Specific AI

The harness engineering movement is largely driven by the software engineering use case: coding agents that write, test, and deploy code. SWE-Agent, LangChain’s TerminalBench, OpenAI’s Codex. These are impressive achievements, but they operate in a domain where verification is relatively straightforward. Code either compiles or it doesn’t. Tests either pass or they don’t.

Professional services, and legal services in particular, present a harder problem. Verification is nuanced. Correctness depends on jurisdiction, recency of legislation, business structure, industry context, and dozens of other variables. A response that’s accurate for a Victorian sole trader may be wrong for a Queensland company. Guidance that was correct last month may be outdated after a legislative change.

This is where domain-specific harness engineering becomes critical. The harness doesn’t just route requests and manage tools. It encodes professional judgement into architectural constraints:

-

Professional boundaries are enforced at the prompt level, not as suggestions but as hard constraints with explicit priority ordering. User safety and professional boundaries always override helpfulness, which always overrides commercial considerations.

-

Complexity classification evaluates every interaction and identifies when a matter requires human professional judgement rather than AI-generated guidance. The system escalates with full context, not as a failure, but as a designed handoff.

-

Temporal awareness is built into every interaction. The harness knows the current financial year, upcoming quarterly deadlines, which regulatory changes are active versus upcoming, and what “this year” means when a business owner asks about tax obligations in July versus January.

-

Continuous knowledge ingestion keeps the knowledge layer current. Our automated pipeline scrapes authoritative Australian sources weekly, extracts structured insights, embeds them with domain-specific legal embeddings, and upserts to the knowledge base with deduplication. The harness ensures Atlas answers reflect current law, not stale training data.

General-purpose agent frameworks can’t encode this level of domain awareness. This is the moat that harness engineering creates for domain-specific applications.

What Comes Next

The convergence around harness engineering is accelerating. Anthropic has published detailed guidance on harness design for long-running applications, describing multi-agent architectures where planner, generator, and evaluator agents collaborate on complex tasks over multi-hour sessions. Karpathy has coined “agentic engineering” as the successor to vibe coding: a discipline requiring skilled practitioners, not just better models.

We see three implications for what we’re building at Lawpath.

The model layer will continue to commoditise. New models are shipping monthly. Each generation narrows the gap with frontier capabilities while dropping in cost. Our multi-model orchestration means we benefit from every improvement without being locked to any single provider. The harness absorbs new models; the experience keeps improving.

Harness engineering will become a recognised discipline. The ad hoc patterns we developed through necessity, including prompt versioning, quality gates, progressive enrichment, and complexity-based routing, are now being codified as best practices. As the discipline matures, we expect to both contribute to and benefit from shared knowledge in the community.

Domain context will be the ultimate differentiator. As harness patterns become well-understood and models commoditise further, the lasting advantage will belong to systems with the deepest domain understanding. Two years of serving Australian small businesses, hundreds of thousands of interactions, a curated legal knowledge base, domain-specific embeddings, fine-tuned models trained on real business conversations: this accumulated context is not something a general-purpose agent can replicate by reading documentation.

The Validation

When we built Cortex, we made a bet: that the value of an AI system lies not in the model it uses, but in everything surrounding it. The prompt assembly, the tool orchestration, the quality verification, the memory systems, the domain knowledge, the professional guardrails. We built these because the domain demanded it, because accuracy in legal services is non-negotiable, and because small business owners deserve AI that genuinely understands their situation rather than generating plausible-sounding generalities.

The industry is now arriving at the same conclusion through a different path. OpenClaw proved that a model-agnostic harness could become the most popular AI project on GitHub. LangChain proved that improving only the harness delivers larger gains than upgrading the model. Anthropic proved that context engineering is the foundation of reliable agents. SWE-Agent proved that interface design matters more than model capability.

We didn’t set out to build a harness. We set out to build something that actually works for Australian small businesses. But it turns out those are the same thing.

The model is not the agent. The harness is.

References

-

Trivedy, V. (2026). “The Anatomy of an Agent Harness.” LangChain Blog. blog.langchain.com

-

Anthropic. (2026). “Effective Context Engineering for AI Agents.” Anthropic Engineering. anthropic.com

-

Yang, J., et al. (2024). “SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering.” NeurIPS 2024. arxiv.org/abs/2405.15793

-

LangChain. (2026). “Improving Deep Agents with Harness Engineering.” LangChain Blog. blog.langchain.dev

-

Hajdrik, A. (2026). “OpenClaw Made Me Think About Harnesses, and Why They Matter.” Medium. medium.com

-

Karpathy, A. (2026). “Agentic Engineering Is the Next Big Thing.” Business Insider. businessinsider.com

-

Anthropic. (2026). “Harness Design for Long-Running Application Development.” Anthropic Engineering. anthropic.com

Lawpath Cortex is the agentic infrastructure behind Lawpath Atlas. For the engineering deep-dive, see The Engineering Patterns Behind Lawpath Atlas.