The Engineering Patterns Behind Lawpath Atlas

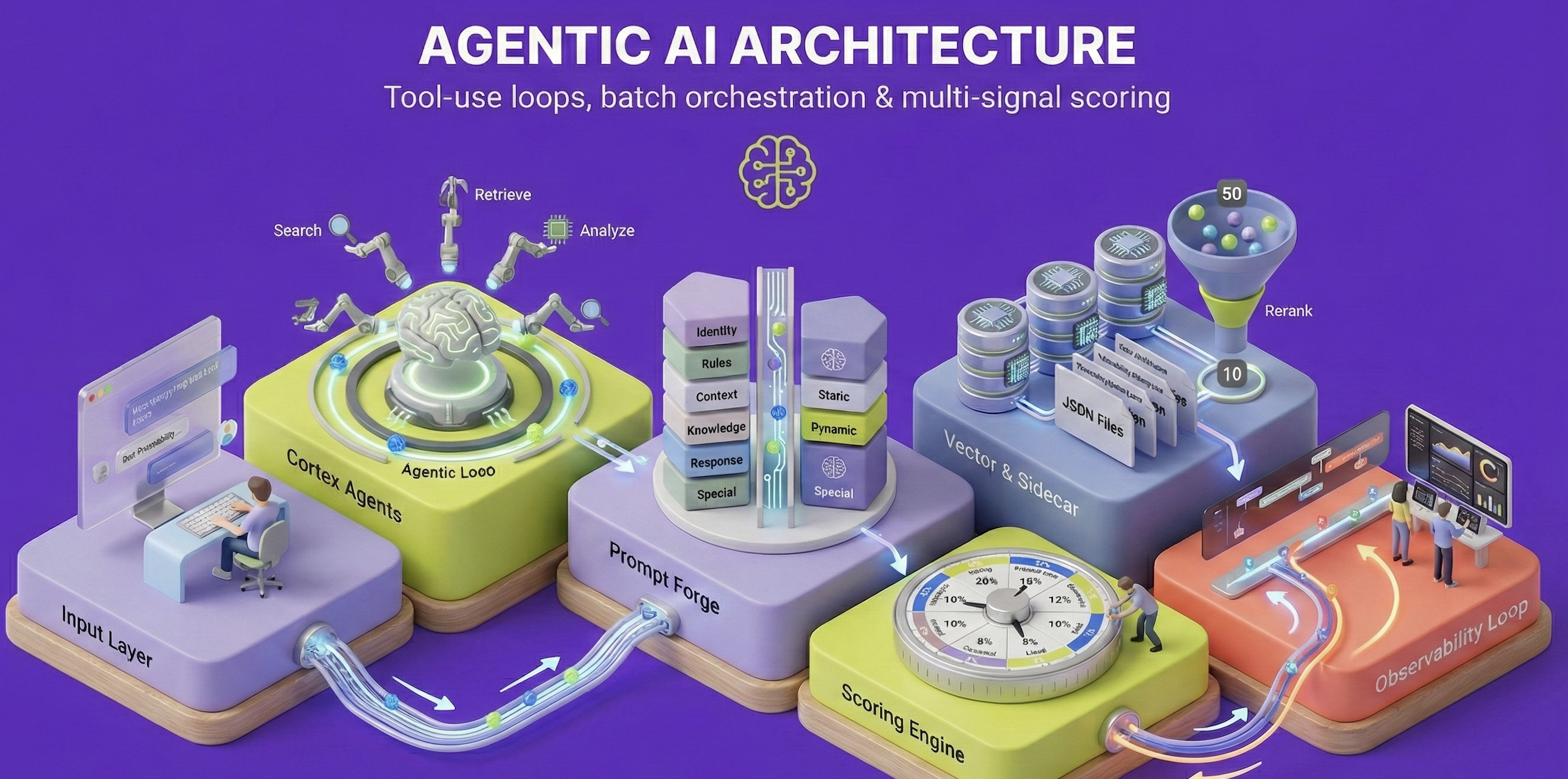

How we built the agentic infrastructure that powers Atlas: tool-use loops, domain-specific retrieval, real-time scoring, streaming agents, and the design principles that make proactive AI for small business possible.

At Lawpath, we set out to build something that didn’t exist yet: an AI operating system that works proactively for small businesses, finding problems before they happen rather than waiting to be asked. We call it Lawpath Atlas.

Building Atlas required solving a series of hard engineering problems. Generic chatbot architectures don’t work when accuracy is non-negotiable, when the AI needs to act autonomously, and when the domain is as specialised as Australian law and accounting. Over the past two years, we’ve developed a set of patterns that form the backbone of our AI infrastructure: Lawpath Cortex.

This post is the engineering companion to our Atlas vision post. Where that post explains what Atlas does for business owners, this one explains how we built it.

Cortex comprises two core subsystems:

- Cortex Agents: the agentic layer that powers user-facing interactions, autonomous research, and advisor copilots

- Cortex Signals: the intelligence layer that processes events, computes scores, and drives proactive recommendations

The Research That Shaped Our Approach

Before diving into implementation, it’s worth understanding the research that informed our thinking.

Andrew Ng’s widely-cited work identifies four foundational patterns for agentic systems: Reflection, Tool Use, Planning, and Multi-Agent Collaboration. His key insight is that “agentic” exists on a spectrum, from low autonomy (predetermined steps) to high autonomy (self-designed workflows). For legal applications, we deliberately operate in the middle: structured enough for reliability, flexible enough for complex queries.

The ReAct framework (Yao et al., ICLR 2023) established the dominant paradigm for combining reasoning with action. ReAct interleaves “thought” steps with “action” steps, creating interpretable execution traces. On knowledge-intensive tasks, ReAct significantly outperformed both pure reasoning and pure action approaches by grounding each step in observable results.

“Reasoning traces help the model induce, track, and update action plans as well as handle exceptions, while actions allow it to interface with external sources to gather additional information.” arxiv.org/abs/2210.03629

For self-correction, Madaan et al.’s Self-Refine (NeurIPS 2023) demonstrated that iterative feedback loops improve output quality by approximately 20% across diverse tasks, without additional training. The pattern is simple: generate, critique, refine, repeat.

These foundations inform every pattern in Cortex and, by extension, every interaction a business owner has with Atlas.

1. The Tool-Use Agentic Loop

The foundation of Cortex Agents is the classic agentic loop: the LLM iteratively calls tools until it has enough information to respond. This directly implements the ReAct pattern, interleaving reasoning with action.

User Query -> LLM -> Tool Call -> Execute -> Observe -> LLM -> ... -> Final ResponseWhen a business owner asks Atlas about hiring their first employee, the agent doesn’t just generate text from its training data. It calls a document search tool to find the relevant employment agreement template, checks whether the user has already created one, searches for the latest award rates, and synthesises all of this into a response grounded in current, verified information.

The agent processes responses in a multi-round loop, handling tool calls until it has gathered sufficient context:

while (continueProcessing) {

for await (const chunk of stream) {

if (chunk.type === 'tool_call') {

const result = await executeToolCall(chunk);

messages.push(formatToolResult(result));

}

}

if (hasToolCalls) {

currentMessages = formatMessagesWithToolResults(...);

} else break;

}This recursive structure mirrors what the ReAct paper describes as “dynamic reasoning to create, maintain, and adjust high-level plans for acting.” Each tool result becomes an observation that informs the next reasoning step.

Web Search with Quality Gates

One of our most critical tools is web search. Following the Self-Refine principle of iterative improvement, we use a three-phase pattern with quality gates:

- Query Analysis: A lightweight model classifies intent and selects relevant domains

- Domain-Filtered Search: Search API with Australian legal and government domains prioritised

- Quality Gate + Fallback: If results don’t meet thresholds, retry without domain filters

private assessQuality(result: InternalSearchResult): QualityAssessment {

const passed =

citationCount >= 2 &&

contentLength >= 300 &&

(hasAuthoritativeSources || citationCount >= 3);

return { passed, citationCount, hasAuthoritativeSources };

}The domain registry maintains tiered authority: .gov.au sources rank highest, followed by legal publishers, then general sources. This ensures Atlas cites authoritative Australian legal sources rather than generic web content.

Why this matters for Atlas: When a business owner asks about a recent legislative change, the quality gate ensures they get an answer grounded in government and legal publisher sources, not blog posts or forum threads. Andrew Ng emphasises that reflection with external feedback dramatically outperforms pure self-reflection. Our quality gates provide that external signal.

2. Domain-Specific Retrieval: Beyond Generic Embeddings

A critical component of our agentic infrastructure is semantic retrieval from our proprietary legal knowledge corpus. This is where generic approaches fall over, and where Atlas’s accuracy advantage originates.

Standard embedding models struggle with legal text because, from a general language perspective, legal jargon appears relatively similar. A contract termination clause and a contract renewal clause might have high cosine similarity despite being semantically opposite. We use domain-specific legal embeddings optimised for Australian legal and accounting content, which significantly outperform standard models at disambiguating relevant legal text.

The input_type parameter is crucial: we use 'document' when indexing and 'query' when searching. This asymmetric embedding approach improves retrieval quality by optimising each vector for its role.

Two-Stage Retrieval with Reranking

Cosine similarity provides a good first-pass ranking, but for legal content we add a reranking stage that provides more accurate relevance scoring:

Query -> Embedding -> Vector Search (top 50) -> Rerank (top 10) -> Final ResultsThis two-stage approach reduces irrelevant material in top results by approximately 25% compared to embedding similarity alone, while using 1/3 of the embedding dimensionality for storage efficiency.

Faceted Customer Narratives

Beyond legal knowledge, we store faceted narratives about each customer in the vector database. These narratives are what make Atlas feel like it knows your business:

| Facet | Content |

|---|---|

| Plans | Subscription history and current plan details |

| Documents | Document usage and contract types |

| Activity | Engagement patterns and recent actions |

| Calls | Advisory consultation history |

| Compliance | ASIC filings and compliance status |

| Formations | Company formation details |

Each facet is embedded separately, enabling semantic search across customer context. When Atlas needs to understand a customer’s compliance history, it retrieves the compliance narrative, not a raw database dump. This is how Atlas connects a question you ask today to a document you created six months ago.

3. Prompt Forge: The Architecture Behind Atlas’s Personality

As our agents grew, so did our system prompts. A single agent prompt exceeded 8,000 tokens. Managing this complexity required structure, especially because Atlas’s personality, knowledge, and professional boundaries all need to remain consistent across every interaction.

We built Prompt Forge to construct prompts from standardised sections:

| Section | Purpose |

|---|---|

| Soul & Identity | Core values, professional boundaries, and persona |

| Critical Rules | Non-negotiable constraints and escalation logic |

| User Context | Dynamic per-user business profile and entitlements |

| Knowledge Base | Platform documentation, pricing, and legal content |

| Response Framework | Output formatting and quality guidelines |

| Special Handling | Edge cases, out-of-scope topics, and safety |

We use markers to separate static (cacheable) from dynamic content:

[[LP:STATIC_START]]

... cacheable system prompt (identity, rules, knowledge) ...

[[LP:STATIC_END]]

[[LP:DYNAMIC_START]]

<user_context>{{user_context}}</user_context>

<pre_response_checklist>{{pre_response_checklist}}</pre_response_checklist>

[[LP:DYNAMIC_END]]Why the separation matters: prompt caching provides significant cost reduction for static content. By explicitly marking boundaries, we ensure cache hits on the expensive system prompt while injecting fresh user context per request. The builder publishes compiled prompts to object storage with version tracking, giving us Git-like history for prompts. This is critical when debugging why an agent’s behaviour changed.

How this powers Atlas: Every interaction assembles a tailored prompt from these sections. The static layers (legal knowledge, behavioural rules, professional boundaries) are cached for performance. The dynamic layers (your specific business profile, your compliance status, today’s date and its implications) are rebuilt fresh. This is how Atlas knows your industry, your structure, and your entitlements without you having to explain them each time.

4. Progressive Due Diligence: Research Agents

One of the patterns that most directly shapes the Atlas experience is what we call progressive due diligence: autonomous research agents that build a profile of each business before they ever interact with Atlas.

When a new user joins Lawpath, an event triggers a research agent that operates autonomously:

- Multi-source intelligence: The agent queries web intelligence services, company validation APIs, and public registries to gather information about the business

- Structured extraction: Using AI tool-use, the agent classifies the business by industry (ANZSIC codes), identifies the business structure, resolves location, and infers likely compliance needs

- Profile persistence: The structured profile is saved and becomes the seed for the customer 360

// Research agent uses tool-use to build a structured profile

const tools = [{

name: 'save_user_profile',

description: 'Save structured profile from research findings',

input_schema: {

type: 'object',

properties: {

industry: { type: 'string' },

subIndustry: { type: 'string' },

businessType: { type: 'string' },

location: { type: 'object' },

likelyNeeds: { type: 'array' },

},

},

}];This is what a good professional does before a first meeting: homework. Atlas automates it for every customer, at scale.

For existing users, a separate enrichment agent re-runs periodically, updating the profile with new public information and platform activity. The system also ingests insights from advisory calls (both phone and video consultations), extracting structured knowledge from transcripts and feeding it back into the customer 360.

5. Real-Time Intelligence: The Signals Engine

Cortex Signals is the real-time customer intelligence engine that makes Atlas proactive rather than reactive. It processes roughly a million events per day through a seven-stage enrichment pipeline, maintaining a continuously updated intelligence layer for every customer.

Signal Categories

The engine computes multiple signal categories in real-time:

| Signal Category | Purpose |

|---|---|

| Churn Probability Index | Risk scoring using a 9-component ensemble |

| Product Qualification | Conversion likelihood based on behavioural patterns |

| Next Best Action | AI-generated recommendations for each customer |

| Service Propensity | Which services (legal, accounting, compliance) the customer likely needs |

| Conversion Signals | High-intent events accumulated over time |

Multi-Signal Scoring

Each score combines weighted signals from multiple data sources. Our Churn Probability Index uses a 9-component ensemble, a design informed by research showing that ensemble approaches consistently outperform single-signal models:

| Component | Weight | What It Measures |

|---|---|---|

| Billing | 28% | Payment health, failed charges, arrears |

| Engagement | 18% | Login frequency and recency |

| Behavioural | 12% | Product usage and value realisation |

| RFM | 10% | Recency-Frequency-Monetary segmentation |

| Trend | 8% | Engagement direction over time |

| Seasonal | 8% | Expected activity for the Australian calendar |

| Lifecycle | 6% | Customer age and maturity |

| Intent | 6% | Cancellation signals |

| Health | 4% | Positive indicators (inverted) |

Adaptive Scoring

The engine adapts to context in ways that generic systems cannot:

- Seasonal adjustment: Reduced engagement scoring during Australian quiet periods (Dec 15 to Jan 15). Without this, we’d flag every customer as high churn risk during Christmas, generating false positives that erode trust in the system.

- Service-type awareness: Virtual office customers have different engagement expectations than legal advice subscribers

- Sigmoid calibration: Raw scores are calibrated to true probabilities

- Value at Risk: High-value customers get priority intervention recommendations, combining estimated lifetime value with churn probability

- Decay functions: Older signals contribute less than recent ones

How this powers Atlas: These scores run invisibly behind every Atlas interaction. When Atlas proactively recommends that you book a compliance review, or surfaces a deadline you haven’t thought about yet, it’s because the signals engine has identified a pattern that warrants attention. The business owner sees a helpful recommendation; underneath, nine scoring components agreed it was time to act.

6. Batch Orchestration and Unified Enrichment

For non-real-time workloads, we use a batch orchestration pattern that generates multiple outputs in a single AI call per user.

Queues (PQL, Churn, Vector) -> Merge by userId -> Build Context -> Batch API -> Fan OutThis architecture emerged from a practical problem: we were making separate AI calls for sales briefs, churn summaries, and vector narratives. Three calls per user, duplicating context loading each time. The unified enrichment system merges these into one, building a comprehensive context per user (documents, consultations, AI interactions, formations) and producing all outputs in a single batch call.

Cost impact: Batch processing with prompt caching reduced our per-user enrichment cost by approximately 60% compared to individual real-time calls.

The batch system also handles our automated knowledge ingestion. A weekly worker scrapes authoritative Australian tax and legal publications, extracts structured knowledge using AI, embeds each insight using legal-optimised embeddings, and upserts to our vector knowledge base with deduplication and merge logic. This is how Atlas stays current on legislative changes, ATO focus areas, and regulatory updates without manual intervention.

7. Streaming Agents and the Advisor Copilot

For real-time conversations, we stream responses with Server-Sent Events, providing a responsive experience even when the agent is performing complex reasoning:

responseStream.write(`data: ${JSON.stringify({ type: 'start' })}\n\n`);

for await (const event of cortexStream) {

if (event.type === 'content_delta') {

responseStream.write(`data: ${JSON.stringify({

type: 'content',

content: event.delta.text,

})}\n\n`);

}

}

responseStream.write(`data: ${JSON.stringify({ type: 'complete' })}\n\n`);We track performance markers throughout the stream: time-to-first-token, thinking duration, and search latency. These metrics drive continuous optimisation.

The Advisor Copilot

One of the more sophisticated applications of the streaming agent pattern is the Advisor Copilot: a real-time AI assistant that runs alongside human advisors during consultations.

Before the call, the system pre-compiles a comprehensive briefing from the customer’s profile, document history, consultation history, and recent AI interactions. During the call, the copilot can search across thousands of past advisory transcripts, surface relevant precedents, and assist the advisor in real time.

After the call, a post-call pipeline:

- Transcribes and summarises the consultation

- Extracts structured insights and follow-up actions

- Feeds everything back into the customer 360

- Generates follow-up recommendations via the next-best-action engine

This closed loop (AI research -> human consultation -> AI-enriched follow-up) is what makes Atlas compound in value over time. Every interaction, whether with Atlas or with a human expert, makes all future interactions better.

8. Memory, Observability, and Continuous Improvement

Our memory and observability layer forms a closed loop: conversations are stored, traced, scored, and fed back into model improvement. This implements the Self-Refine principle at the system level.

Observability Tracing

Every agent interaction is traced end-to-end. Within each trace, we create generations for LLM calls and spans for tool executions. This gives visibility into token usage, tool latency, streaming performance, and cost attribution by user, session, and prompt version.

Feedback Loops and Fine-Tuning

User feedback flows back into improvement:

User Feedback -> Trace Score -> Dataset Export -> Fine-Tuning PipelineExplicit feedback: Users rate responses, attaching scores to traces.

Implicit signals: Did the user ask a follow-up? (suggests incomplete answer) Did they copy the response? (suggests useful content) Did they book a consultation after? (suggests high-value interaction)

High-scored traces form the basis of our fine-tuning dataset. We filter by quality, curate for diversity and coverage, remove PII, and train domain-specific models that bake in the response style, Australian English conventions, and professional guardrails that define Atlas’s personality. This closes the loop: user interactions generate traces, traces are scored, high-quality examples become training data, improved models serve future users.

9. The Sidecar Pattern: Progressive Signal Accumulation

One of our most impactful patterns is the User Sidecar Store: a per-user data structure that accumulates signals progressively without requiring schema changes to our primary datastore.

The sidecar holds multiple data sections, each updated independently:

| Section | TTL | Purpose |

|---|---|---|

| Engagement Cache | 12h | Platform activity metrics |

| Conversion Signals | Permanent | Accumulated high-intent events |

| PQL Data | 24h | AI-generated propensity scores |

| Churn Data | 24h | Risk drivers, scoring, RFM segments |

| Document Context | 2h | Temporary storage until batch processes |

| Watermarks | Permanent | Processing state to prevent duplicates |

The key insight: each handler updates only its section, merging with existing data:

export async function saveSidecar(

userId: string,

updates: Partial<UserSidecar>

): Promise<void> {

const existing = await getSidecar(userId);

const sidecar: UserSidecar = {

...existing,

...updates,

userId,

updatedAt: new Date().toISOString(),

watermarks: {

...existing?.watermarks,

...updates.watermarks,

},

};

await storage.put(getSidecarKey(userId), sidecar);

}This pattern solves several problems:

Schema Evolution: Adding new AI enrichment fields doesn’t require database migrations. We add fields to the sidecar and start writing immediately.

Progressive Accumulation: Conversion signals accumulate over time. When a user views pricing, that signal is preserved as newer events arrive.

Decoupled Processing: Real-time handlers save context to the sidecar; batch workers read it later. This decoupling prevents real-time paths from blocking on expensive AI operations.

How this powers Atlas: The sidecar is how Atlas remembers that you viewed pricing last week, created a contractor agreement yesterday, and asked about GST this morning. Each event is captured independently by different handlers, but the sidecar unifies them into a coherent picture that the agent draws on for your next interaction.

Design Principles

Several principles guide Cortex architecture and, by extension, how Atlas behaves:

Accuracy over speed: In professional services, a confident wrong answer is worse than a slower right one. Every pattern includes validation gates. We’d rather skip a low-confidence intervention than send something wrong.

Prompt caching for cost efficiency: Static system prompts are cached at the LLM provider level. The cost difference is substantial. Batch processing with caching can be 60%+ cheaper.

Seasonal awareness: Australian business has distinct quiet periods. Our scoring models adjust, preventing false-positive churn alerts during holidays when reduced engagement is expected.

Progressive enrichment: Rather than trying to know everything at once, we build understanding incrementally. Research agents start the profile; platform activity enriches it; advisory calls deepen it; batch enrichment synthesises it.

Observability as a first-class concern: Distributed tracing, structured logging, and team notifications let us monitor agent behaviour in production. When something goes wrong, we can trace exactly which tool returned bad data or which prompt version caused the regression.

References

-

Yao, S., et al. (2023). “ReAct: Synergizing Reasoning and Acting in Language Models.” ICLR 2023. arxiv.org/abs/2210.03629

-

Madaan, A., et al. (2023). “Self-Refine: Iterative Refinement with Self-Feedback.” NeurIPS 2023. arxiv.org/abs/2303.17651

-

Ng, A. (2024). “Four Design Patterns for AI Agentic Workflows.” DeepLearning.AI. deeplearning.ai/courses/agentic-ai

-

Microsoft Azure. (2025). “Agent Factory: The New Era of Agentic AI.” azure.microsoft.com

These patterns are the engineering foundation that makes Lawpath Atlas possible. Each solves a specific problem we encountered as we scaled Cortex from prototype to serving hundreds of thousands of Australian businesses. Individually, they’re established patterns adapted for our domain. Together, they create something genuinely new: an AI operating system that works proactively for small business, finding problems before they happen so owners can focus on what they actually set out to do.

The Lawpath Engineering Team